Introduction

Modern AI agents are no longer just chatbots.

They think, use tools, handle real APIs, and manage state safely.

In this article, we will build a production-grade GitHub agent using:

- LangGraph 1.x for explicit agent control flow

- MCP (Model Context Protocol) to access GitHub safely

- OpenAI-compatible LLMs via GitHub Models

- A reducer pattern to prevent token overflow and runtime crashes

By the end, you will have a working agent that can answer a request such as:

“List my 3 most recent GitHub repositories”

It answers using real GitHub data, not hallucinations.

Why LangGraph + MCP?

Traditional “agent” abstractions hide too much logic.

LangGraph takes the opposite approach:

- Every step is explicit

- Tool usage is controlled

- Errors are observable

- State is deterministic

MCP complements this by separating:

- Reasoning (LLM)

- Execution (tools / APIs)

This separation is essential for safe, scalable agents.

Architecture Overview

Our agent follows this loop:

User → LLM → Tool → Reducer → LLM → Final Answer

Each step is a node in a LangGraph state machine.
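To make the loop concrete before introducing LangGraph, here is a minimal plain-Python sketch of the same state machine. Everything in it is a hypothetical stand-in: the stub llm_node, tool_node, and reduce_node functions, the dictionary message shape, and the 40-character limit are assumptions for illustration, not LangGraph APIs.

```python
# Plain-Python sketch of the loop: LLM -> Tool -> Reducer -> LLM -> end.
# All nodes are stubs; no model or GitHub call is made.

def llm_node(state):
    """Stub model: request a tool once, then answer from the reduced data."""
    last = state["messages"][-1]
    if last["role"] == "user":
        return [{"role": "assistant", "content": "", "tool_call": "list_repos"}]
    return [{"role": "assistant", "content": "Final answer based on: " + last["content"]}]

def tool_node(state):
    """Stub tool: returns a deliberately oversized payload."""
    return [{"role": "tool", "content": "repo-a, repo-b, repo-c, " + "x" * 5000}]

def reduce_node(state):
    """Stub reducer: truncate the tool payload before the model sees it again."""
    raw = state["messages"][-1]["content"]
    return [{"role": "assistant", "content": raw[:40]}]

def route_after_llm(state):
    """Conditional edge: go to the tools only if the model asked for one."""
    return "tools" if state["messages"][-1].get("tool_call") else "end"

NODES = {"llm": llm_node, "tools": tool_node, "reduce": reduce_node}
EDGES = {"tools": "reduce", "reduce": "llm"}  # fixed (unconditional) edges

def run(question):
    state = {"messages": [{"role": "user", "content": question}]}
    visited = []
    current = "llm"
    while current != "end":
        visited.append(current)
        state["messages"].extend(NODES[current](state))
        current = route_after_llm(state) if current == "llm" else EDGES[current]
    return visited, state

visited, final_state = run("List my 3 most recent GitHub repositories")
print(" -> ".join(visited))
```

The only branching point is the edge leaving the LLM node; every other transition is fixed, which is what keeps the control flow easy to follow.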

Prerequisites

Before running the code, ensure:

- Python 3.10+

- Node.js installed (required for MCP GitHub server)

- A GitHub Personal Access Token (classic) with the repo scope

- The token stored in the GITHUB_TOKEN environment variable:

# Windows (PowerShell); setx only affects terminals opened afterwards

setx GITHUB_TOKEN "ghp_xxxxxxxxxxxxxxxxxxxxx"
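The prerequisites above can also be checked from Python before the agent starts. The sketch below is an assumption-laden convenience, not part of the original code: check_prerequisites is a hypothetical helper, and it only verifies that npx is on the PATH, that the interpreter is recent enough, and that GITHUB_TOKEN is non-empty. It does not validate the token's scope.

```python
import os
import shutil
import sys

def check_prerequisites(env=None, which=shutil.which, version=None):
    """Return a list of human-readable problems; an empty list means OK.

    The parameters exist only so the check can be exercised with fake
    values; by default the real environment is inspected.
    """
    env = os.environ if env is None else env
    version = sys.version_info[:2] if version is None else version

    problems = []
    if version < (3, 10):
        problems.append("Python 3.10+ is required")
    if which("npx") is None:
        problems.append("npx not found; install Node.js")
    if not env.get("GITHUB_TOKEN"):
        problems.append("GITHUB_TOKEN is not set")
    return problems

if __name__ == "__main__":
    for problem in check_prerequisites():
        print("Missing prerequisite:", problem)
```

Running this first gives a clearer message than a failed npx launch halfway through agent startup.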

Complete Working Code (LangGraph 1.x + MCP)

This code was tested with LangGraph 1.0.5.

import asyncio
import os

from langchain_openai import ChatOpenAI
from langchain_mcp_adapters.client import MultiServerMCPClient
from langgraph.graph.state import StateGraph
from langgraph.graph.message import MessagesState
from langgraph.constants import END
from langgraph.prebuilt.tool_node import ToolNode, tools_condition
from langchain_core.messages import AIMessage

# ----------------------------
# Configuration
# ----------------------------

GITHUB_TOKEN = os.environ.get("GITHUB_TOKEN")

if not GITHUB_TOKEN:
    raise RuntimeError("GITHUB_TOKEN environment variable not set")

# ----------------------------
# Agent State
# ----------------------------

class AgentState(MessagesState):
    """Holds the conversation and tool messages."""
    pass

# ----------------------------
# Reducer Node
# ----------------------------

def reduce_tool_output(state: AgentState):
    """
    Tool responses can be very large and may not be plain strings.

    This reducer:
    - Normalizes tool output to text
    - Truncates it to a safe size
    - Converts it into an AI message
    """
    last_msg = state["messages"][-1]
    content = last_msg.content

    # Normalize tool output: content may be a list of blocks or a plain value
    if isinstance(content, list):
        text_parts = []
        for item in content:
            if isinstance(item, dict) and "text" in item:
                text_parts.append(item["text"])
            else:
                text_parts.append(str(item))
        normalized = "\n".join(text_parts)
    else:
        normalized = str(content)

    # Keep only the first 1500 characters
    truncated = normalized[:1500]

    return {
        "messages": [
            AIMessage(
                content=(
                    "Here is the relevant GitHub data (truncated):\n\n"
                    + truncated
                )
            )
        ]
    }

# ----------------------------
# Main Agent Logic
# ----------------------------

async def main():
    # 1. LLM (Reasoning only)
    llm = ChatOpenAI(
        model="gpt-4o-mini",
        api_key=GITHUB_TOKEN,
        base_url="https://models.inference.ai.azure.com",
    )

    # 2. MCP GitHub Client
    client = MultiServerMCPClient(
        {
            "github": {
                "transport": "stdio",
                "command": "npx",
                "args": ["-y", "@modelcontextprotocol/server-github"],
                "env": {
                    "GITHUB_PERSONAL_ACCESS_TOKEN": GITHUB_TOKEN
                },
            }
        }
    )

    # 3. Discover tools
    tools = await client.get_tools()

    # IMPORTANT:
    # Filter tools to avoid invalid GitHub search calls
    repo_tools = [
        t for t in tools
        if "repo" in t.name.lower() and "search" not in t.name.lower()
    ]

    llm_with_tools = llm.bind_tools(repo_tools)
    tool_node = ToolNode(repo_tools)

    # 4. LLM Node
    def llm_node(state: AgentState):
        response = llm_with_tools.invoke(state["messages"])
        return {"messages": [response]}

    # 5. Build LangGraph
    builder = StateGraph(AgentState)

    builder.add_node("llm", llm_node)
    builder.add_node("tools", tool_node)
    builder.add_node("reduce", reduce_tool_output)

    builder.set_entry_point("llm")

    builder.add_conditional_edges(
        "llm",
        tools_condition,
        {
            "tools": "tools",
            END: END,
        },
    )

    builder.add_edge("tools", "reduce")
    builder.add_edge("reduce", "llm")

    graph = builder.compile()

    # 6. Run the Agent
    inputs = {
        "messages": [
            (
                "system",
                "Use GitHub repository listing tools only. "
                "Return ONLY the 3 most recent repositories."
            ),
            ("user", "List my 3 most recent GitHub repositories.")
        ]
    }

    print("\n--- Agent Output ---\n")

    async for event in graph.astream(inputs):
        for value in event.values():
            if "messages" in value:
                msg = value["messages"][-1]
                if msg.content:
                    print(msg.content)

# ----------------------------
# Entry Point
# ----------------------------

if __name__ == "__main__":
    asyncio.run(main())

Why This Code Is Correct (Key Design Decisions)

1. Tools Are Explicitly Bound

llm_with_tools = llm.bind_tools(repo_tools)

Without this, the model is never told which tools exist, so it cannot request an MCP tool call.

2. Search Tools Are Disabled

GitHub search APIs are:

- ambiguous

- error-prone

- not user-scoped

Filtering tools avoids 422 Validation Failed errors.
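The name-based filter is easy to try in isolation. In the sketch below, FakeTool and the tool names are hypothetical placeholders chosen for illustration; they are not a claim about which tools the MCP GitHub server actually exposes. Only the predicate itself is taken from the agent code.

```python
from dataclasses import dataclass

@dataclass
class FakeTool:
    """Hypothetical stand-in for a tool object; only .name is used."""
    name: str

def keep_repo_tools(tools):
    """Same predicate as the agent: repository tools, minus search tools."""
    return [
        t for t in tools
        if "repo" in t.name.lower() and "search" not in t.name.lower()
    ]

# Illustrative names only (assumed, not read from a real server).
discovered = [
    FakeTool("list_repositories"),
    FakeTool("search_repositories"),
    FakeTool("create_issue"),
    FakeTool("Get_Repo_Details"),
]

kept_names = [t.name for t in keep_repo_tools(discovered)]
print(kept_names)
```

Because the filter is a substring match, it is worth printing the surviving tool names once against the real server to confirm that a repository listing tool is actually among them.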

3. Reducer Prevents Token Explosions

Tool responses can exceed model limits.

The reducer:

- trims data

- normalizes message format

- avoids crashes like:

KeyError: tool_call_id

TypeError: can only concatenate str (not list)
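The normalization and truncation step can be exercised without a graph or a model. This is a minimal sketch that mirrors the logic of reduce_tool_output; normalize_content is a hypothetical helper name, and the default limit of 1500 is the character count used in the agent code.

```python
def normalize_content(content, limit=1500):
    """Flatten tool output to text and cut it to at most `limit` characters."""
    if isinstance(content, list):
        # Content blocks: take the "text" field when present, else stringify.
        parts = [
            item["text"] if isinstance(item, dict) and "text" in item else str(item)
            for item in content
        ]
        text = "\n".join(parts)
    else:
        text = str(content)
    return text[:limit]

blocks = [{"type": "text", "text": "repo-a"}, "repo-b", 3]
print(normalize_content(blocks))
print(len(normalize_content("x" * 2000)))
```

Note that the limit is measured in characters, so it bounds the token count only approximately.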

4. LangGraph 1.x Message Semantics Are Respected

We never manually create tool messages.

Only ToolNode does that.

Reducers output AIMessage, not tool messages.
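One way to see why hand-built tool messages are risky is to check a message history for tool messages that do not answer an earlier tool call. The sketch below assumes OpenAI-style chat dictionaries (role, tool_calls, tool_call_id); find_orphan_tool_messages is a hypothetical helper and is not how LangGraph itself validates messages.

```python
def find_orphan_tool_messages(messages):
    """Return indexes of tool messages that answer no pending tool call."""
    pending = set()   # tool call ids announced by the assistant, not yet answered
    orphans = []
    for index, msg in enumerate(messages):
        if msg["role"] == "assistant":
            for call in msg.get("tool_calls", []):
                pending.add(call["id"])
        elif msg["role"] == "tool":
            call_id = msg.get("tool_call_id")
            if call_id in pending:
                pending.discard(call_id)
            else:
                orphans.append(index)
    return orphans

valid_history = [
    {"role": "user", "content": "List my repositories"},
    {"role": "assistant", "content": "", "tool_calls": [{"id": "call_1"}]},
    {"role": "tool", "tool_call_id": "call_1", "content": "repo-a"},
    {"role": "assistant", "content": "Here is the data (truncated): repo-a"},
]

# A second, hand-written tool message has no matching call id.
broken_history = valid_history + [{"role": "tool", "content": "summary"}]

print(find_orphan_tool_messages(valid_history))
print(find_orphan_tool_messages(broken_history))
```

An assistant-style summary, as in valid_history, adds no new tool message and so leaves the call and response pairing intact.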

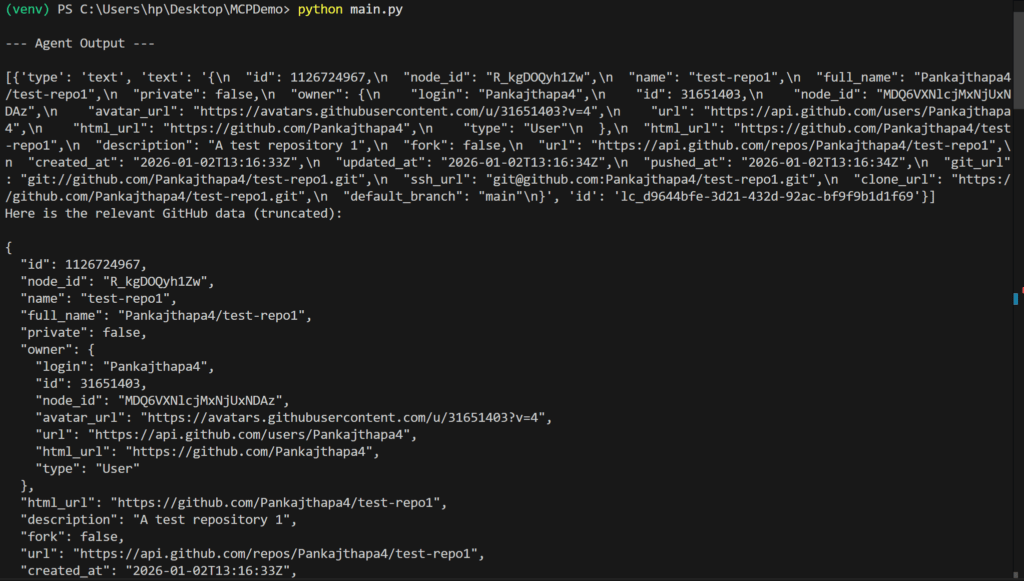

Output of the above code: